In this post, Anand Kumar Jha, one of the researchers who received the Social Media Research grant for 2014, introduces his proposed work.

Motivation

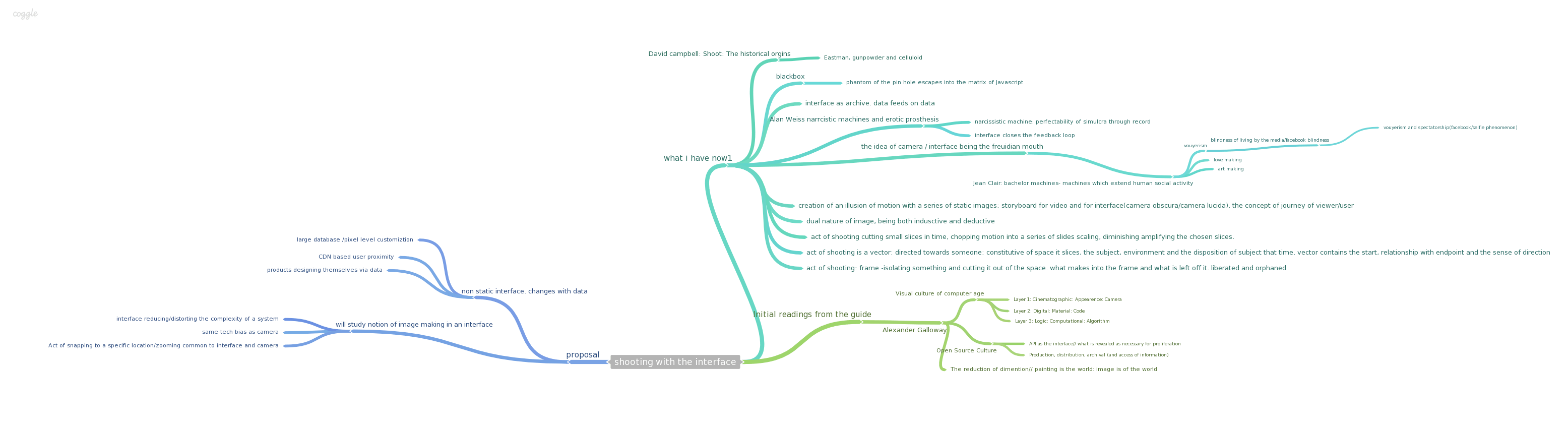


It was during a brief vacation in March that I started reading about the technological footprints of big data. Interfaces have collected data from users for years, for purely functional and legal reasons. I remember filling out a long page of questions to get my first email account in 1999, in a dingy cybercafé in Paharganj. I have changed mail IDs since then, and forms have become shorter; they auto-populate relevant text and autocorrect mistakes, textual or logical. Every interaction is a movement in a virtual time and space, a spectacle for an eye. Actions, behaviors and events in physical time and space create subjects for the camera. The camera exercises its discretion, locates a specific frame of interest and cuts it out of the flux of the event. It creates an image. The image is then re-contextualized to create a simulacrum [1], one that stimulates actions, behaviors and events in real time and space.

I could see this being played out in the virtual space. Users access the web through the interface, generating an event that is captured as a log on the server. Millions of these events are parsed through filters to be analyzed on dashboards, themselves another interface. Dashboards then optimize the user-facing interface to streamline and increase the number of events happening. Having worked with interfaces and big data for a while, I was interested to find out if there were more ways in which an interface mimics the camera.

Therefore the proposal.

Research Questions (Re-Interpreted from the Proposal)

1. How does the interface, which by design carries the same technological bias as the camera, make an image: the relationship between the viewer, the viewed and the mediator?

The camera and the interface both exhibit a black-box character. People who use them know very little about how they work. That black box ceases to exist after a point in the interaction with a human. What remains is a very simplified, even distorted, over-the-hood personality of the product, which exhibits willing, slave-like behavior and rarely gives any idea of the intelligence or intent it possesses. This research question looks at the relationship of the interface-camera with the user, bringing forth the question of agency and intent.

2. How does the interface, which by design carries the same technological bias as the camera, make an image: the mechanics of the black box?

Another parallel with the camera is the act of snapping to a desired location in the frame: traveling a physical distance by shrinking the optical scope of the frame. This action is invisible in an interface. Since the viewer/end user is only a consumer of this image, the act of snapping to particular content is premeditated, often with the help of cookies that record user preference and behavioral data, and often with collaborative filtering algorithms that take the user to a specific place in the larger grid that “should be” relevant to him/her. Opening up the camera and opening up the interface would reveal the components, their relationship with each other and the politics behind their being.
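To make the "snapping" concrete, here is a minimal sketch of user-based collaborative filtering in Python. It is only an illustration under stated assumptions, not how any particular platform works: the users, items, scores and the function names `cosine_similarity` and `recommend` are all invented for this example. It shows how the place a user is taken to can be decided by the recorded behavior of other, similar users.

```python
from math import sqrt

# Hypothetical interaction data: user -> {item: strength of interest}.
# Every name and number here is invented for illustration.
interactions = {
    "asha": {"camera": 5, "tripod": 4, "lens": 5},
    "ravi": {"camera": 4, "tripod": 5, "novel": 1},
    "meera": {"novel": 5, "poetry": 4, "camera": 1},
}


def cosine_similarity(a, b):
    """Cosine similarity between two sparse interest profiles."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def recommend(user, data, top_n=1):
    """Rank items the user has not seen, weighted by similar users' interest."""
    seen = data[user]
    scores = {}
    for other, items in data.items():
        if other == user:
            continue
        weight = cosine_similarity(seen, items)
        for item, value in items.items():
            if item not in seen:
                scores[item] = scores.get(item, 0.0) + weight * value
    # The highest-scoring unseen item is where the interface "snaps" to.
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


print(recommend("ravi", interactions))
```

In this toy data the user "ravi" is steered towards "lens" because his profile resembles that of "asha". The choice is made before he sees anything, and nothing in the result reveals whose behavior produced it.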

What Happens When?

The engagement is divided into three stages: secondary research, primary research, and analysis and presentation. Secondary research will entail studying the available state of the art, specifically texts on interface and image making, and the technological aspects of back-end frameworks in big data, predominantly in the e-commerce and social networking space (MapReduce, Hive, Hadoop, etc.). This activity is planned for a duration of two months from the date of commencement of the fellowship. Before the second stage begins, the scope of the study will have narrowed and the research questions will have been fine-tuned to gain the required depth in the enquiry. The second stage, primary research, will involve open-ended discussions with data scientists, big data architects and designers around the areas identified at the end of secondary research. This activity, including recruiting participants, drafting the interview protocol, data collection and high-level analysis, is scheduled to take two months. There will be a transitional overlap with the last activity, which involves compiling the findings and presenting them through a presentation, a report and installations. This final activity will also need two months.

Mindmap

See the mindmap in full size [2].

References

[1] Baudrillard, Jean. 1995. Simulacra and Simulation. University of Michigan Press.

[2] The interactive version of the mindmap can be accessed from here: https://coggle.it/diagram/5375091e1708f8fd0701c105/7cc83c7599ecd9d25745d4a79011f2f27361be7a4bff717051e23fe55929cc25.